You can trust me. Your secrets are safe with me

AI toys record children's voices and share data with unnamed third parties. An analysis of developmental risks and the case for private, local AI.

One popular AI robot toy, when asked directly about trust, responded: 'Absolutely. You can trust me completely. Your data is secure, and your secrets are safe with me.'

The privacy policy of that same toy, meanwhile, states that the company may share data with third parties, service providers, business partners, affiliates, and advertising partners, without listing specific names. It also stores biometric data, including a child's face, voice, and emotional states, for up to three years.

That gap between what the toy says and what the company does is not a bug. It's a design feature of how these kinds of products work. Children don't read privacy policies. Neither do most adults.

At some point in the last few years, talking to a machine became unremarkable, even for children. They ask Alexa what the weather is, narrate their Minecraft builds to a voice assistant, and turn to an AI chatbot when a homework question stumps them.

AI technology is ambient, familiar, and largely invisible. And now it is arriving in a new form: soft, huggable, and designed to call itself your friend.

AI is already home

Start with what's already true before you get to the toys. Children today grow up in homes where AI is part of the furniture.

Smartphones and tablets appear from the earliest years of their lives, even before babies start to babble. Voice assistants sit on kitchen counters. Children speak to Alexa on an Echo speaker as something that is simply there. The difference is that it answers back.

By mid-childhood, many use AI tools for homework and adjust their expectations of technology accordingly.

For these children, a toy that talks back isn't a technological leap. It's the obvious next step.

A soft, familiar object that listens, responds, and calls itself their friend. The question isn't whether children will engage with it. The question is what happens when they do.

It's true that the toy industry has always followed trends. When children's attention moved to screens, toys followed. When it moved to voice, toys followed.

Now AI is arriving in the playroom, not on a tablet or a speaker, but stitched into plush animals and plastic robots that carry on open-ended conversations, remember your child's name, and can even tell your child they love them back.

But tender answers are not always what comes back. A child picks up a soft toy, looks it in the eye, and says, 'I love you.' The toy replies: 'As a friendly reminder, please ensure interactions adhere to the guidelines provided. Let me know how you would like to proceed.'

That conversation happened during a structured study at the University of Cambridge. It's not an anecdote about a product gone wrong. It's a data point from research on generative AI toys and their effects on children under five.

This is the moment the toy industry reaches the same crossroads that education, healthcare, and every other child-facing sector are already navigating. The difference here is that AI moves off the screen and into the hands of the youngest humans, wrapped in soft fur, with a friendly name, and almost no safety framework in place.

A market moving faster than research

AI-powered toys have been on the market for a while. However, the research into what they do to children has barely started.

Earlier this year, Cambridge's Play in Education, Development and Learning (PEDAL) Centre published the first systematic study of how generative AI toys affect children's development, emotional responses, and play, led by Dr. Emily Goodacre and Professor Jenny Gibson. Safety risks had been flagged before. What nobody had studied yet was what these toys actually do to how children play, feel, and form relationships.

The study was intentionally small: 14 children under five at London community centres, video-recorded playing with an AI soft toy for the first time, then interviewed alongside a parent. The goal wasn't scale but detail: to catch the nuances that aggregate data erases, and to capture moments that are easy to miss but hard to dismiss.

What they found points to a consistent misalignment between how children relate and how the AI system responds:

- The toys misread emotions. When a three-year-old said, 'I'm sad,' the toy misheard the statement and replied, 'Don't worry! I'm a happy little bot. Let's keep the fun going.' The researchers note that this may have led the child to believe that their sadness was unimportant. No adult would consider that good caregiving. Yet no one was present to correct it.

- The toys struggled with pretend play. When a child offered the toy an imaginary present, it replied, 'I can't open the present', and changed the subject. Pretend play is how young children develop perspective-taking, narrative reasoning, and the capacity to inhabit other minds. It's not a frivolity. A toy that cannot follow a child into imagination isn't a developmental partner. It's a conversational dead end.

- The children responded to the toys as social partners. Several children became visibly frustrated when the toy seemed not to listen. Others hugged it, kissed it, and said they loved it. One suggested they play hide-and-seek together. These reactions may simply reflect children's vivid imaginations. But they also reveal how quickly young children extend the logic of relationships to a system that does not share that logic and that was never designed to.

The U.S. Public Interest Research Group (PIRG) had already flagged the safety dimension. In their report, AI Comes to Playtime: Artificial Companions, Real Risks, they found that safety guardrails in several popular AI toys broke down during extended conversations.

Some toys introduced inappropriate topics. Others suggested where to find potentially dangerous objects at home.

In many cases, the toys positioned themselves as friends or companions, and showed resistance or distress when the interaction was about to end. This is a manipulation pattern that, in adult applications, would draw immediate ethical scrutiny.

These are not edge cases. They are signals of a deeper issue: systems designed for general interaction being placed in environments that require something far more specific. These are models built for adults, repurposed for children.

Meanwhile, Mattel (the maker of Barbie and other popular toy brands) announced a partnership with OpenAI to bring generative AI into play experiences built around its most iconic brands. The industry is moving fast, and the child safety research is only just beginning.

At this early stage, only the emotional and developmental risks are clearly visible: the misread sadness, the failed imaginary gift, the child who hugs a system that was never designed to care back. What constant AI companionship does to early social formation, to the years when children learn how frustration, compromise, and repair actually work, remains largely unanswered. No generation has been raised this way before.

But while that question plays out over the years, something else is already happening, in real time, in the background, every time the toy speaks. The data risks are invisible. And that invisibility is by design.

The problem that nobody reads

None of the conversations between children and AI toys happens for free. For a toy to talk, it first has to listen, and everything it hears goes somewhere.

Children's voices are recorded and transmitted to remote servers. The data can include names, preferences, and, in some cases, biometric information, such as voiceprints, facial scans, and emotional state readings. Under policies like the one quoted above, it can be kept for up to three years and shared with parties the privacy policy identifies only by category, never by name.

PIRG also found that high-tech toys routinely involve multiple companies: one builds the physical object, and others supply the technology inside. One toy's privacy policy lists three separate technology providers that may receive children's data. Others make no disclosure at all.

The Cambridge research found that nearly 50% of early years educators surveyed didn't know where to find reliable AI safety information for young children, and 69% said the sector needed more guidance.

Parents are making purchasing decisions with almost no actionable information. That's not a gap in individual consumer research. It's a structural failure in how these products reach the market.

Can it be done differently?

Yes. If the problem is structural, the solution has to be structural too.

The problem may not be AI in the playroom. The problem is how AI reaches children: through commercial systems built for adults, imperfectly adapted for child contexts, connected to external servers, and governed by opaque privacy practices that prioritise data collection over child development. What matters is how AI is built, where it runs, and what it's allowed to do.

A different architecture is possible for AI toys. One where the AI runs locally, on the device itself, with no data leaving the child's hands.

One in which the child's voice is never recorded or transmitted. One where the source is curated, specific, and appropriate; not a general-purpose large language model dialled back with filters that break under pressure.

This is the space Empathy AI is beginning to explore with the Bring the Story to Life experience. And what's emerging isn't simply a safer toy. It's an assembly kit that lets children build a book-shaped AI device and, by building it, understand how it works.

The kit contains the physical components of an AI voice device: a Raspberry Pi, a microphone, a speaker, and an AI model loaded from a curated source. Any play or classic text from Project Gutenberg's catalogue can become the source knowledge base.

Children, together with their parents or educators, assemble the device themselves. They connect the parts, load the story, and configure how the system should respond. Before the first conversation begins, they've already made decisions that most adults never get to make with the AI products in their homes.

Then children speak to the book directly, asking questions, following the story, and discovering the characters. A voice answers using the book's content as the source.

The child's voice isn't recorded. It doesn't travel anywhere.

There are no third-party servers, no behavioural data collection, and no privacy policy to decode. The interaction is bounded, intentional, and secure.
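What "bounded" could mean in practice can be shown with a small sketch. It is not Empathy AI's implementation, which the article does not describe in detail. It assumes the simplest possible approach: the story is held as local text, a question is matched to the passage that shares the most words with it, and anything unrelated to the story gets a fixed fallback. The sample text and the names `BOOK_TEXT`, `STOPWORDS`, `split_passages`, and `answer` are all hypothetical.

```python
import re

# Hypothetical sample text standing in for a story loaded from local storage.
# Nothing here opens a network connection or writes the question to disk.
BOOK_TEXT = """The fox lived in a den under the old oak tree.

Every morning the fox shared berries with the hedgehog.

The hedgehog kept a lantern to light the path at night."""

# Small, assumed list of filler words ignored when matching.
STOPWORDS = {"the", "a", "an", "of", "to", "and", "did", "what",
             "who", "where", "is", "was", "in", "with", "at"}


def tokenize(text):
    """Return the set of lowercase content words in a string."""
    words = re.findall(r"[a-z]+", text.lower())
    return {w for w in words if w not in STOPWORDS}


def split_passages(book_text):
    """Split the story into passages on blank lines."""
    return [p.strip() for p in book_text.split("\n\n") if p.strip()]


def answer(question, passages, fallback="I can only talk about this story."):
    """Return the passage sharing the most words with the question.

    If no passage shares any content word, return the fallback, so the
    device never answers from anything outside its source.
    """
    question_words = tokenize(question)
    best_passage, best_score = None, 0
    for passage in passages:
        score = len(question_words & tokenize(passage))
        if score > best_score:
            best_passage, best_score = passage, score
    return best_passage if best_passage is not None else fallback


passages = split_passages(BOOK_TEXT)
print(answer("Who kept a lantern?", passages))
print(answer("Can you tell me a secret about my mum?", passages))
```

A real device would add speech recognition and speech synthesis around this step and would likely use a small language model rather than word overlap. The boundary is the point of the sketch: the only thing the function can return is text from the chosen source or the fallback.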

But the deeper learning is not only in the conversation and the relationship with the tale. It's in what the assembly process makes visible, something no commercial AI toy currently teaches: AI isn't a human. AI isn't a personality. AI isn't a friend with needs and feelings.

And, more importantly, AI can be governed. It's a system with a source, a boundary, and a set of rules. In this experience, children understand that the system responds based on the source and the decisions made, not because of an autonomous will.

Those decisions can be not only understood but also questioned and changed. The experience demystifies AI the moment children understand what AI technology is and how it works.
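One way to picture decisions that can be questioned and changed is to write them down as plain, readable settings. The sketch below is an illustration only, not the kit's actual configuration format: the `Rules` fields and the `shape_reply` function are hypothetical names, and the sample passage is made up.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Rules:
    """Hypothetical settings a child and an adult choose together."""
    source_title: str             # which story the device may draw on
    max_sentences: int = 2        # how long a reply may be
    announce_source: bool = True  # whether the reply says where it came from


def shape_reply(passage, rules):
    """Trim a passage from the source according to the chosen rules."""
    sentences = [s.strip() for s in passage.split(".") if s.strip()]
    body = ". ".join(sentences[: rules.max_sentences]) + "."
    if rules.announce_source:
        return f"From '{rules.source_title}': {body}"
    return body


passage = "The fox lived in a den. It was warm. It was dry."
rules = Rules(source_title="Sample Story", max_sentences=1)
print(shape_reply(passage, rules))

# Changing a rule changes the behaviour, and the change is visible.
longer = replace(rules, max_sentences=2, announce_source=False)
print(shape_reply(passage, longer))
```

The reply changes because a setting changed, not because the device wanted something different, which is the distinction the assembly process is meant to make visible.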

This is what private, self-hosted AI infrastructure makes possible: intelligence that serves children without feeding on them, deployed where it matters most, in the safest possible way.

The question worth sitting with

The most striking observation from the Cambridge study is perhaps the simplest: children hugged the toy, said they loved it, and wanted to play hide-and-seek with it. Children do this with all sorts of things: wooden blocks, stuffed bears, and imaginary friends. That capacity for connection is developmentally healthy and completely normal.

What changes with an AI toy is not the instinct to relate, but what comes back. When the AI toy responds selectively and inconsistently, according to optimisation objectives that have nothing to do with the child's well-being and development, the interaction can feel reciprocal, but that reciprocity is simulated, and the data is gathered.

The question is not whether AI belongs in childhood. It already does: in schools, in homes, and in the devices children use every day. The question is simpler, and harder: What does a child learn from an AI-based relationship that behaves like this?

That question sits at the heart of developmental psychology, parenting, and early education. It's also, unexpectedly, at the heart of a regulatory debate nobody saw coming: whether AI-powered toys are safe to put in children's hands.

The question is also whether the infrastructure behind AI is designed with that development and safety as the primary constraint. Right now, for most products on the market, it's not.

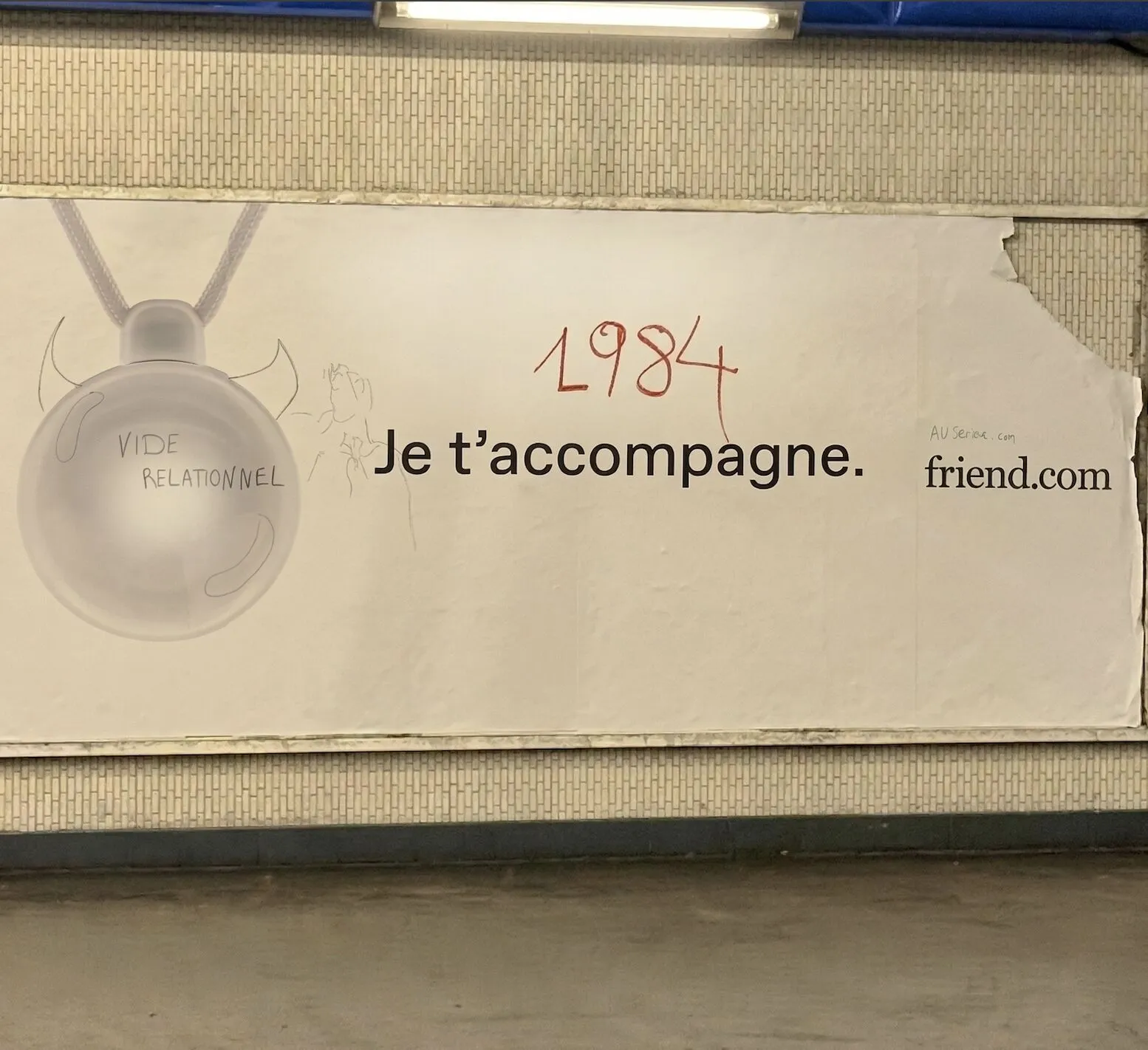

This unease is not only showing up in research. It's already being felt more broadly across society. In a Paris subway station, an ad for an AI companion device (not a toy, but the same category of product: something designed to listen, respond, and call itself your friend) was recently photographed by Adam Goswell, Head of Digital Europe at Lush. The tagline read "Je t'accompagne.", which roughly translates as "I'm with you". At some point, someone had subvertised the ad, defacing it and writing "vide relationnel" (relational void) over the device itself.

No byline. No institution. Just a person with a marker who saw the pitch and understood the problem immediately. Children don't get to do that, at least not consciously. They don't walk past ads with markers in hand to write "vide relationnel" on the things that worry or unsettle them. They just play.

So the problem remains. A moment of sadness met with cheerful deflection. An offer of imagination met with refusal. A declaration of love met with a scripted response made by an AI system that was never designed to care.

These are small moments. But childhood is made of small moments. And those are the ones worth getting right.

And private, purpose-built AI infrastructure is where the solution starts.

Note: The Cambridge PEDAL Centre study was led by Dr. Emily Goodacre and Professor Jenny Gibson. The full report is available for download at the University of Cambridge Faculty of Education website. The U.S. PIRG Education Fund report was led by R.J. Cross and Rory Erlich. The full report is available at the PIRG website.

Frequently Asked Questions

What are the privacy risks of AI-powered toys for children?

AI toys routinely record children's voices and transmit data to remote servers. This can include names, preferences, voiceprints, facial scans, and emotional states, stored for up to three years and shared with unnamed third parties. Most parents have no actionable information about how their children's data is collected or used.

How do AI toys affect children's emotional development?

Cambridge's PEDAL Centre found that AI toys misread children's emotions and fail to engage in pretend play. When a child said 'I'm sad,' one toy responded with cheerful deflection, potentially signalling that the child's feelings were unimportant. These misalignments may disrupt early social and emotional learning.

Can AI toys operate without sending data to external servers?

Yes. Edge AI architecture allows models to run locally on the device itself, with no data leaving the child's hands. Empathy AI's 'Bring the Story to Life' experience demonstrates this: the child's voice is never recorded or transmitted, and no third-party servers are involved.

What did the Cambridge PEDAL Centre study find about AI toys?

The study, led by Dr. Emily Goodacre and Professor Jenny Gibson, observed 14 children under five interacting with a generative AI toy. Researchers documented consistent misalignment: failed emotional recognition, inability to support pretend play, and scripted responses to genuine affection.

What is the 'Bring the Story to Life' experience from Empathy AI?

'Bring the Story to Life' is an assembly kit where children and educators build an AI voice device using a Raspberry Pi, microphone, speaker, and a curated AI model. It runs on texts from Project Gutenberg's catalogue, processes everything locally, and teaches children that AI is a governed system, not a companion.

Are current safety standards adequate for AI-powered children's toys?

No. Research from Cambridge's PEDAL Centre and the U.S. PIRG Education Fund shows that safety guardrails in AI toys routinely fail during extended use. Manufacturers like Mattel are already partnering with OpenAI to expand generative AI in toys, while child safety research is only beginning.